Build your CI/CD with AWS CodePipeline and Elastic Beanstalk

Posted By

Aashish Chetwani

DevOps is fast becoming standard practice, and CI/CD (Continuous Integration and Continuous Delivery/Deployment) is the term most often associated with developing and releasing software in a DevOps process. In simple terms, Continuous Integration (CI) is a process that lets developers integrate their code into the main shared repository multiple times a day and gives them quick feedback about broken functionality or failing tests. Instead of building and integrating work from different teams or people at the end of the development cycle, with Continuous Integration you build it at regular intervals. This helps developers meet code quality standards, resolve bugs early, and reduce integration costs.

Continuous Delivery is an extension of Continuous Integration that automates the release process so that the software can be deployed to staging or production at any time. Continuous Deployment goes one step further and automatically builds and deploys every change to the servers. The process can be fully automated to build, test, and deploy to multiple environments, and it can be set up to handle build failures by reverting to the previous good state.

There are several tools on the market that can help you build a CI/CD pipeline capable of meeting your agile and continuous build, deploy, and delivery requirements. Jenkins, GoCD, Travis CI, GitLab, and Bamboo are some of the names that come to mind immediately when someone says CI/CD. In this blog, however, I am going to explain how you can build a complete CI/CD pipeline with the AWS Developer Tools. AWS offers multiple services, such as AWS CodePipeline, AWS CodeBuild, AWS CodeDeploy, and Amazon EC2, for building your continuous pipeline. I will demonstrate this by setting up a CI/CD pipeline using AWS CodePipeline and AWS Elastic Beanstalk. So, let’s have a look at how you can do this step by step:

Step 1: Create a deployment environment

In continuous deployment, you need a deployment environment, which can be an EC2 server, a Docker container, or Elastic Beanstalk (which handles the environment configuration and bootstrapping automatically). In this case, I am going to use Elastic Beanstalk.

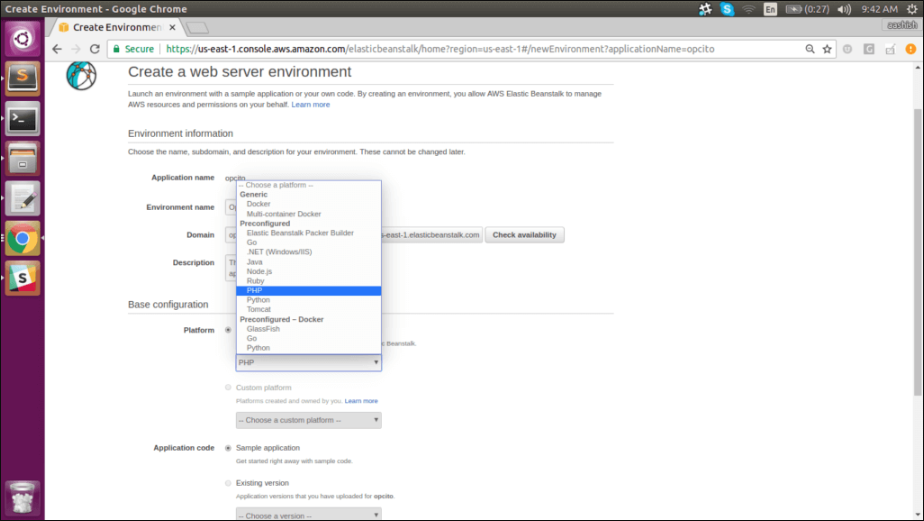

a. Elastic Beanstalk hosts your web application without you having to launch, configure, or operate the underlying infrastructure yourself; it takes care of all of that. I am going to deploy a PHP application on Elastic Beanstalk, so let’s create an environment for a PHP application with a single instance.

b. Select PHP as the platform, then set the other environment parameters, such as single instance, VPC, security group, and key pair, in the advanced options.

c. Once you have the environment, you can see the health check status and check the configurations of the environment. It will show the PHP configuration items you’ve selected during setup.
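The same environment can also be described in code rather than through the console. The following is a minimal sketch, assuming the AWS SDK for Python (boto3) and configured credentials for the actual call. The application, environment, and key pair names are hypothetical, and the solution stack name is left as a placeholder because the available PHP platform names change over time (boto3's `list_available_solution_stacks` returns the current ones). A real environment may also need further options, such as an instance profile.

```python
import json


def build_environment_request(app_name, env_name, solution_stack,
                              key_name=None, security_groups=None,
                              vpc_id=None, subnet_ids=None):
    """Build keyword arguments for Elastic Beanstalk's CreateEnvironment call."""
    options = [
        # A single-instance environment has no load balancer, as in step a.
        ("aws:elasticbeanstalk:environment", "EnvironmentType", "SingleInstance"),
    ]
    if key_name:
        options.append(("aws:autoscaling:launchconfiguration", "EC2KeyName", key_name))
    if security_groups:
        options.append(("aws:autoscaling:launchconfiguration", "SecurityGroups",
                        ",".join(security_groups)))
    if vpc_id:
        options.append(("aws:ec2:vpc", "VPCId", vpc_id))
    if subnet_ids:
        options.append(("aws:ec2:vpc", "Subnets", ",".join(subnet_ids)))
    return {
        "ApplicationName": app_name,
        "EnvironmentName": env_name,
        "SolutionStackName": solution_stack,
        "OptionSettings": [
            {"Namespace": namespace, "OptionName": name, "Value": value}
            for namespace, name, value in options
        ],
    }


def create_environment(request):
    """Send the request to AWS. Needs boto3 and configured credentials."""
    import boto3  # imported lazily so the builder above runs without it

    client = boto3.client("elasticbeanstalk")
    # Creating an application that already exists raises an error,
    # so skip this call if the application is already there.
    client.create_application(ApplicationName=request["ApplicationName"])
    return client.create_environment(**request)


if __name__ == "__main__":
    request = build_environment_request(
        app_name="sample-php-app",                      # hypothetical
        env_name="sample-php-env",                      # hypothetical
        solution_stack="<name of a PHP solution stack>",  # placeholder
        key_name="my-key-pair",                         # hypothetical
    )
    print(json.dumps(request, indent=2))
```

Keeping the request builder separate from the AWS call makes it possible to review or version the environment settings before anything is created.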

Step 2: Clone the code from GitHub repository

I have a sample PHP application that I am going to deploy on Elastic Beanstalk, and along with that, I will configure CodePipeline. The pipeline takes the source code from your GitHub repository and performs actions on it. Whenever the source code changes, it deploys that change directly to the servers.

Here, you have three options for the source code: a GitHub repository, Amazon S3, or an AWS CodeCommit repository. You can select any one of the three. For this particular case, I am going to use GitHub.

Step 3: Create a CodePipeline

Create a pipeline in CodePipeline. It will build, test, and deploy your code every time the code changes, based on the release configuration you define. This lets you rapidly deliver features and updates to your deployment servers.

a. Go to the CodePipeline service, click on “Create pipeline”, and name the pipeline accordingly.

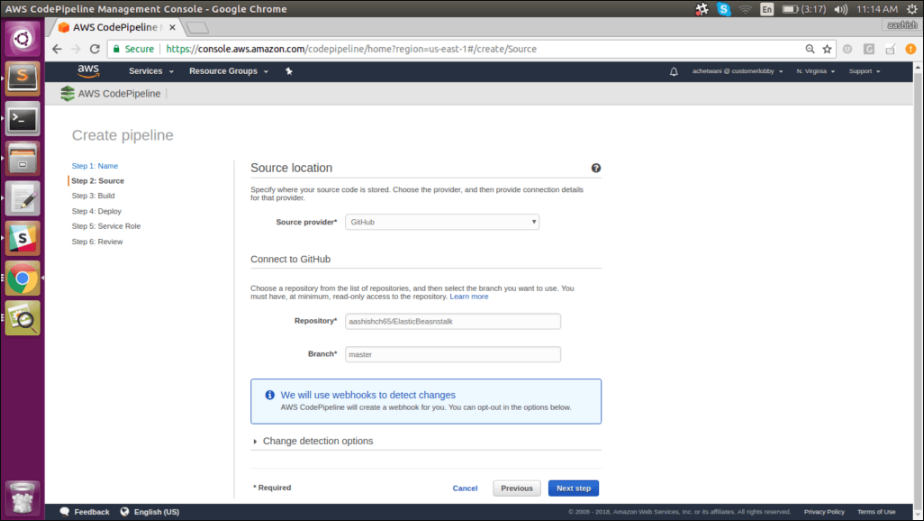

b. Select the source provider (the place your source code is pulled from); the options are GitHub, Amazon S3, and AWS CodeCommit. Select GitHub as the source provider, authorize the application, and specify the repository and the branch.

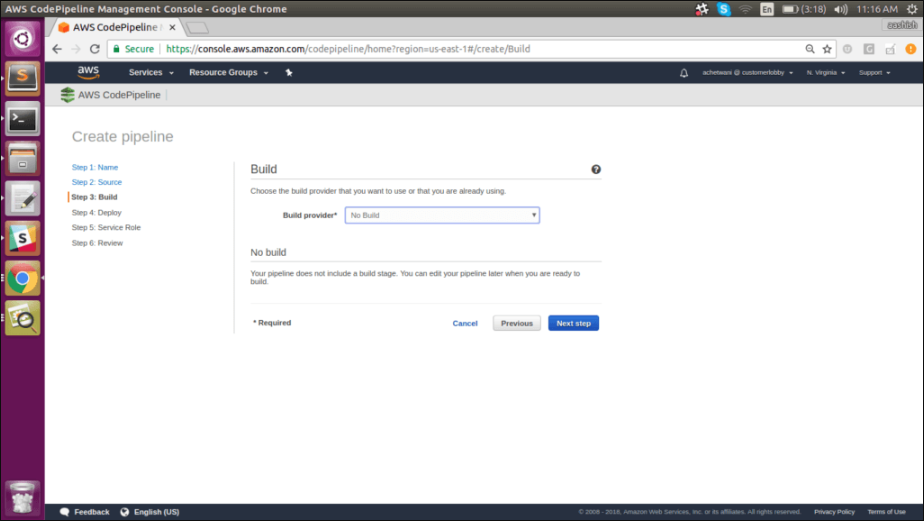

c. The next step is to build the code. In a complete CI/CD process, there should be a proper build step that produces a package, and the deploy step follows once the build has completed. In our case, however, we are using a simple PHP application, so we won’t need a build step. Select “No Build” as the build provider from the drop-down menu. If you do need a build step, you have multiple options, such as Jenkins, CodeBuild, and Solano CI.

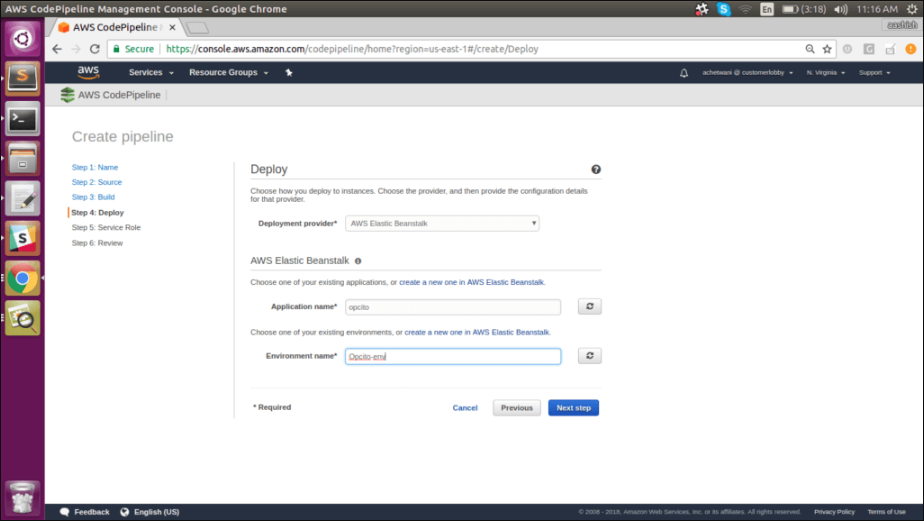

d. Select the deployment provider. In our case, it will be Amazon Elastic Beanstalk. Name the application and the environment accordingly.

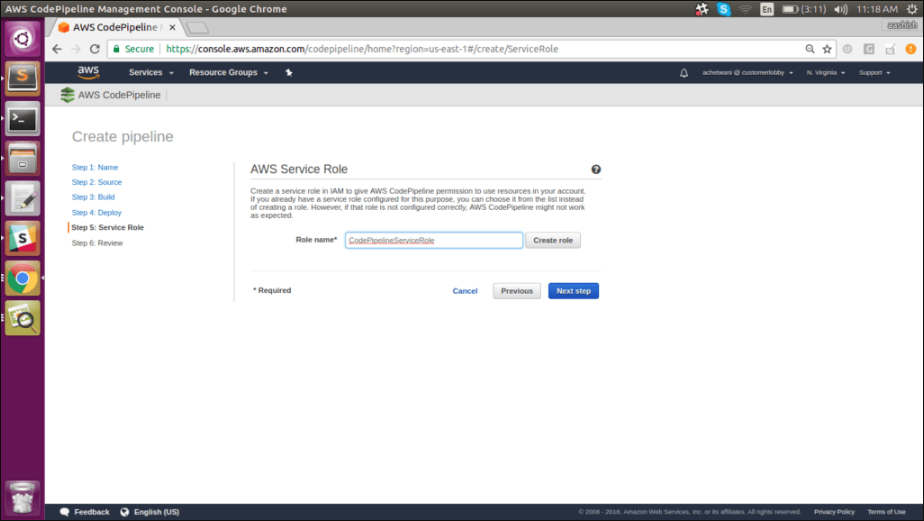

e. Select the service role. If a service role is not present, create a new one. Then click on “Next” and finally on “Create pipeline”.

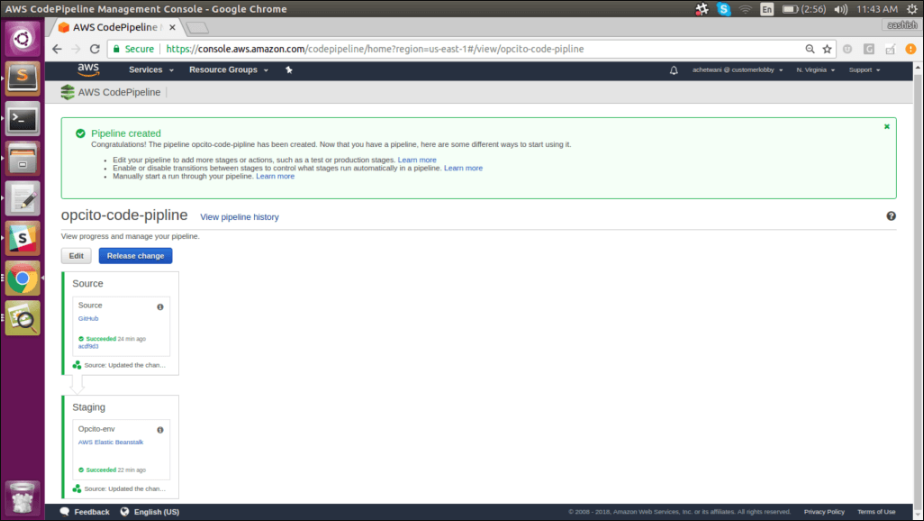

f. After your pipeline is created, the pipeline status page will appear and the pipeline will automatically start to run. You can see the progress, as well as success and failure messages, as the pipeline performs each action.

To make sure your pipeline is running successfully, monitor the progress of the pipeline as it moves through each stage. The status of each stage will change from “No executions yet” to “In Progress” and then to either “Succeeded” or “Failed”. The pipeline should complete the first run within a few minutes.

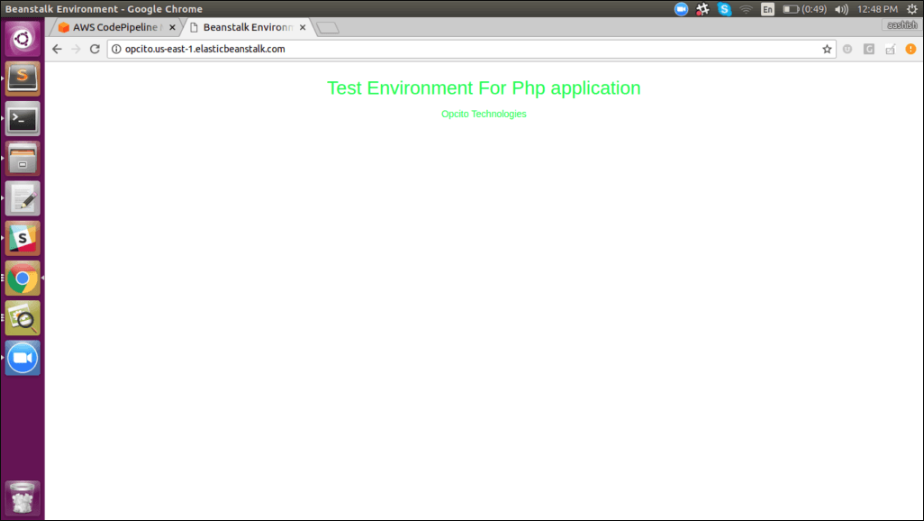

g. Open the Beanstalk URL, and you will be able to see the updated code.
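The console choices in steps a to e correspond to a pipeline definition with two stages, Source and Deploy, and no build stage. The sketch below builds that definition as a plain dictionary in the shape CodePipeline's `CreatePipeline` API expects, assuming the OAuth-based GitHub source action (version 1) that matches the “authorize the application” step. All names, the role ARN, and the artifact bucket are hypothetical. When you use the API directly, you supply the S3 artifact bucket yourself.

```python
import os


def build_pipeline(name, role_arn, artifact_bucket, github_owner, github_repo,
                   branch, oauth_token, eb_application, eb_environment):
    """Return a two-stage pipeline definition: GitHub source, Beanstalk deploy."""
    source_action = {
        "name": "Source",
        "actionTypeId": {"category": "Source", "owner": "ThirdParty",
                         "provider": "GitHub", "version": "1"},
        "configuration": {"Owner": github_owner, "Repo": github_repo,
                          "Branch": branch, "OAuthToken": oauth_token},
        # The source stage hands the checked-out code on as an artifact.
        "outputArtifacts": [{"name": "SourceArtifact"}],
    }
    deploy_action = {
        "name": "Deploy",
        "actionTypeId": {"category": "Deploy", "owner": "AWS",
                         "provider": "ElasticBeanstalk", "version": "1"},
        "configuration": {"ApplicationName": eb_application,
                          "EnvironmentName": eb_environment},
        # With no build stage, the deploy stage consumes the source artifact.
        "inputArtifacts": [{"name": "SourceArtifact"}],
    }
    return {
        "name": name,
        "roleArn": role_arn,
        "artifactStore": {"type": "S3", "location": artifact_bucket},
        "stages": [
            {"name": "Source", "actions": [source_action]},
            {"name": "Deploy", "actions": [deploy_action]},
        ],
    }


def create_pipeline(pipeline):
    """Send the definition to AWS. Needs boto3 and configured credentials."""
    import boto3  # imported lazily so the builder above runs without it

    return boto3.client("codepipeline").create_pipeline(pipeline=pipeline)


if __name__ == "__main__":
    pipeline = build_pipeline(
        name="sample-php-pipeline",                                  # hypothetical
        role_arn="arn:aws:iam::123456789012:role/pipeline-service",  # hypothetical
        artifact_bucket="my-pipeline-artifacts",                     # hypothetical
        github_owner="my-github-user",                               # hypothetical
        github_repo="sample-php-app",                                # hypothetical
        branch="master",
        oauth_token=os.environ.get("GITHUB_TOKEN", "<token>"),
        eb_application="sample-php-app",
        eb_environment="sample-php-env",
    )
    print([stage["name"] for stage in pipeline["stages"]])
```

The token is read from an environment variable here so that it never ends up in the source file.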

Step 4: Commit the changes and update your app

You can revise your code and commit the changes to the repository. CodePipeline will detect your updated sample code and then automatically initiate deploying it to your EC2 instance via Elastic Beanstalk.

Let’s update your index.html page and commit the change. After some time, you will see the pipeline run again: it automatically pulls the updated code, deploys it, and the application starts reflecting the change.
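Instead of watching the console after a commit, you can also read the stage statuses programmatically. The sketch below, assuming boto3 for the actual call, summarizes a response from CodePipeline's `GetPipelineState` API. The sample response is a trimmed, hypothetical one. Note that the API reports `InProgress` where the console shows “In Progress”, and a stage that has never run simply has no `latestExecution` entry.

```python
def summarize_pipeline_state(state):
    """Map each stage name to its latest status."""
    summary = {}
    for stage in state.get("stageStates", []):
        latest = stage.get("latestExecution")
        # A stage that has not run yet carries no latestExecution entry.
        summary[stage["stageName"]] = latest["status"] if latest else "No executions yet"
    return summary


def pipeline_succeeded(state):
    """True only if there is at least one stage and every stage succeeded."""
    statuses = list(summarize_pipeline_state(state).values())
    return bool(statuses) and all(status == "Succeeded" for status in statuses)


def fetch_pipeline_state(pipeline_name):
    """Fetch the live state. Needs boto3 and configured credentials."""
    import boto3  # imported lazily so the helpers above run without it

    return boto3.client("codepipeline").get_pipeline_state(name=pipeline_name)


if __name__ == "__main__":
    # Trimmed, hypothetical response used in place of a live call.
    sample_state = {
        "pipelineName": "sample-php-pipeline",
        "stageStates": [
            {"stageName": "Source", "latestExecution": {"status": "Succeeded"}},
            {"stageName": "Deploy", "latestExecution": {"status": "InProgress"}},
        ],
    }
    print(summarize_pipeline_state(sample_state))
    print(pipeline_succeeded(sample_state))
```

Calling `fetch_pipeline_state` in a loop with a short sleep until `pipeline_succeeded` returns true (or a stage reports `Failed`) is one simple way to wait for a deployment to finish.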

This is it; your complete CI/CD pipeline is ready.

I have used AWS CodePipeline, GitHub, and Elastic Beanstalk to build this pipeline. There are multiple ways to create a pipeline. You can try your hands at various other options such as CodeBuild, Jenkins, CodeCommit, etc.

So, now the question is: which option should you go with? According to the latest reports, the big three still hold more than 55% of the cloud market, and AWS is still the biggest player with more than 32% market share. So, it makes perfect sense to go with CodePipeline if you are already on the AWS cloud. Although it is limited to the AWS cloud, CodePipeline is fairly simple to use and fits in easily with your existing AWS setup, AWS tools, and the wider AWS ecosystem. Plus, there are added advantages like Amazon’s security and IAM controls. But its simplicity is the factor that I think will help it gain more of the market in the near future. It is so simple to use that even a newcomer can get a pipeline up and running in a matter of hours.

If you are already using Jenkins, you may be wondering why you should switch to a new tool. If Jenkins is serving your needs at the moment, then I have good news for you: you can combine Jenkins and CodePipeline and keep your build logic in Jenkins. So, what are you waiting for? Try your hand at AWS CodePipeline, and let me know what you think.
